Fixing the Pitfalls of Building Hadoop 2.9.2 from Source on Linux

This article walks through building Hadoop 2.9.2 from source on Linux and how to get past the pitfalls that come up along the way.

The build process

Environment: CentOS 7, running in VMware Fusion on a Mac.

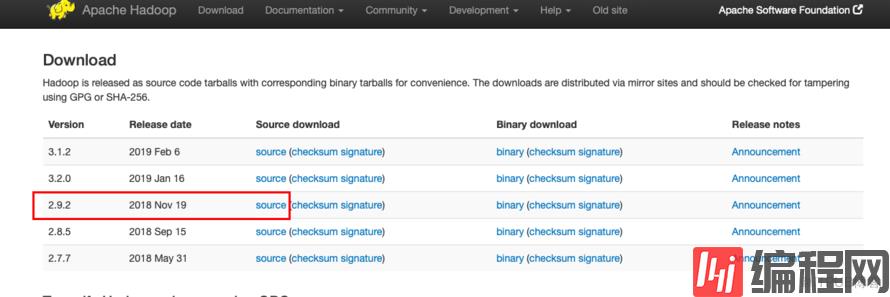

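Download the Hadoop source package. (A side gripe about Baidu: searching for "Hadoop" returns nothing but ads, while "hadoop" is slightly better, with only the first result being an ad.)

I used the 2.9.2 source package, which was the stable release at the time of writing.

Unpack it.

Plan a simple directory layout and create the directories: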

    [root@nancycici bin]# mkdir /opt/sourcecode
    [root@nancycici bin]# mkdir /opt/software
    [root@nancycici bin]# cd /opt/sourcecode

Upload the archive with the shell tool rz (it has to be installed first: yum -y install lrzsz):

    [root@nancycici ~]# rz

    [root@nancycici sourcecode]# ls
    hadoop-2.9.2-src  hadoop-2.9.2-src.tar  hadoop-3.1.2-src  hadoop-3.1.2-src.tar

Check BUILDING.txt for the build requirements, then install them one by one:

    [root@nancycici hadoop-2.9.2-src]# vi /opt/sourcecode/hadoop-2.9.2-src/BUILDING.txt
    Requirements:
    * Unix System
    * JDK 1.7 or 1.8
    * Maven 3.0 or later
    * Findbugs 1.3.9 (if running findbugs)
    * ProtocolBuffer 2.5.0
    * CMake 2.6 or newer (if compiling native code), must be 3.0 or newer on Mac
    * Zlib devel (if compiling native code)
    * openssl devel (if compiling native hadoop-pipes and to get the best HDFS encryption performance)
    * Linux FUSE (Filesystem in Userspace) version 2.6 or above (if compiling fuse_dfs)
    * Internet connection for first build (to fetch all Maven and Hadoop dependencies)
    * python (for releasedocs)
    * Node.js / bower / Ember-cli (for YARN UI v2 building)
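Before installing anything, it can help to see which of these requirements are already satisfied. The following is a minimal sketch and not part of the original walkthrough: the function names `have_tool`, `report_tools`, and `protoc_is_250` are made up for illustration, and the tool list is an assumption based on the requirements above. It only reports what it finds on PATH and changes nothing.

```shell
# have_tool NAME: succeeds if NAME resolves to a command on PATH.
have_tool() {
    command -v "$1" >/dev/null 2>&1
}

# report_tools NAME...: print one "ok" or "MISSING" line per tool.
report_tools() {
    for report_tool in "$@"; do
        if have_tool "$report_tool"; then
            printf 'ok       %s -> %s\n' "$report_tool" "$(command -v "$report_tool")"
        else
            printf 'MISSING  %s\n' "$report_tool"
        fi
    done
}

# protoc_is_250 "OUTPUT": succeeds only if OUTPUT is the string that
# protoc 2.5.0 prints for --version.
protoc_is_250() {
    [ "$1" = "libprotoc 2.5.0" ]
}

# Tool list is an assumption taken from this walkthrough; adjust as needed.
report_tools java mvn protoc cmake gcc g++ make

# BUILDING.txt asks for ProtocolBuffer 2.5.0 specifically, so check the version too.
if have_tool protoc; then
    protoc_is_250 "$(protoc --version)" || echo "protoc found, but it is not 2.5.0"
fi
```

Anything reported as MISSING is a candidate for the installation steps that follow.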

For the JDK, I downloaded 1.8 from the official site, installed it, and configured the environment variables:

    [root@nancycici ~]# mkdir /usr/java
    [root@nancycici ~]# mv jdk-8u201-linux-x64.rpm /usr/java/
    [root@nancycici ~]# cd /usr/java/
    [root@nancycici java]# ll
    total 261376
    -r--------. 1 root root 176209195 Mar 27 18:10 jdk-8u201-linux-x64.rpm
    [root@nancycici java]# rpm -ivh jdk-8u201-linux-x64.rpm
    [root@nancycici java]# mv jdk1.8.0_201-amd64 jdk1.8.0
    [root@nancycici java]# vi /etc/profile
    export JAVA_HOME=/usr/java/jdk1.8.0
    export PATH=$JAVA_HOME/bin:$PATH

Apply the environment variables and check the result:

    [root@nancycici java]# source /etc/profile
    [root@nancycici java]# which java
    /usr/java/jdk1.8.0/bin/java
    [root@nancycici java]# java -version
    java version "1.8.0_201"
    Java(TM) SE Runtime Environment (build 1.8.0_201-b09)
    Java HotSpot(TM) 64-Bit Server VM (build 25.201-b09, mixed mode)

Next, Maven:

* Maven 3.0 or later

    [root@nancycici software]# tar -xvf apache-maven-3.6.0-bin.tar
    [root@nancycici protobuf]# vi /etc/profile
    export MAVEN_HOME=/opt/software/apache-maven-3.6.0
    export PATH=$MAVEN_HOME/bin:$JAVA_HOME/bin:$PATH
    [root@nancycici protobuf]# source /etc/profile
    [root@nancycici ~]# mvn -version
    Apache Maven 3.6.0 (97c98ec64a1fdfee7767ce5ffb20918da4f719f3; 2018-10-25T02:41:47+08:00)
    Maven home: /opt/software/apache-maven-3.6.0
    Java version: 1.8.0_201, vendor: Oracle Corporation, runtime: /usr/java/jdk1.8.0/jre
    Default locale: en_US, platform encoding: UTF-8
    OS name: "linux", version: "3.10.0-514.el7.x86_64", arch: "amd64", family: "unix"

Next, FindBugs. (I first downloaded FindBugs 3.0.1 from the official site, ran into build problems, and switched to 1.3.9. It is safer to follow BUILDING.txt.)

* Findbugs 1.3.9 (if running findbugs)

    [root@nancycici software]# tar -xvf findbugs-1.3.9.tar

Then, in /etc/profile:

    export FINDBUGS_HOME=/opt/software/findbugs-1.3.9
    export PATH=$FINDBUGS_HOME/bin:$MAVEN_HOME/bin:$JAVA_HOME/bin:$PATH
    [root@nancycici protobuf]# source /etc/profile

Next, Protocol Buffers, which has to be built and installed from source, and CMake, which can be installed directly with yum:

* ProtocolBuffer 2.5.0
* CMake 2.6 or newer (if compiling native code), must be 3.0 or newer on Mac

    [root@nancycici software]# tar -xvf protobuf-2.5.0.tar
    [root@nancycici protobuf-2.5.0]# yum install -y gcc gcc-c++ make cmake
    [root@nancycici protobuf-2.5.0]# ./configure --prefix=/usr/local/protobuf
    [root@nancycici protobuf-2.5.0]# make && make install
    [root@nancycici protobuf]# vi /etc/profile
    export PROTOC_HOME=/usr/local/protobuf
    export PATH=$FINDBUGS_HOME/bin:$PROTOC_HOME/bin:$MAVEN_HOME/bin:$JAVA_HOME/bin:$PATH
    [root@nancycici protobuf]# source /etc/profile
    [root@nancycici protobuf]# which protoc
    /usr/local/protobuf/bin/protoc
    [root@nancycici software]# cmake -version
    cmake version 2.8.12.2

The remaining requirements can be installed directly with yum:

    [root@nancycici software]# yum install -y openssl openssl-devel svn ncurses-devel zlib-devel libtool
    [root@nancycici software]# yum install -y snappy-devel bzip2 bzip2-devel lzo lzo-devel lzop autoconf automake

Now the build can start (a reliable network connection matters a lot):

    [root@nancycici software]# cd /opt/sourcecode
    [root@nancycici sourcecode]# ls
    hadoop-2.9.2-src  hadoop-2.9.2-src.tar  hadoop-3.1.2-src  hadoop-3.1.2-src.tar
    [root@nancycici sourcecode]# cd hadoop-2.9.2-src/
    [root@nancycici hadoop-2.9.2-src]# mvn clean package -Pdist,native -DskipTests -Dtar

And here come the pitfalls.

The build can take anywhere from an hour to several days, because modules keep failing in various ways, for example:

[INFO] Apache Hadoop Auth ................................. FAILURE

[INFO] Apache Hadoop Common ............................... FAILURE

[INFO] Apache Hadoop Common Project ....................... FAILURE

[INFO] Apache Hadoop HDFS Client .......................... FAILURE

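I did not record the exact errors, but most of them came from network problems reaching the Amazon repositories. Some modules pass when the build is simply run again. When the build keeps stopping at the same place, something else is probably wrong. Apache Hadoop Auth and Apache Hadoop Common failed repeatedly, and from the errors I guessed that some packages were not installed properly. Reinstalling gcc and gcc-c++ with yum showed that they had indeed not been installed successfully the first time. These packages are worth trying, and make sure the installation actually reports success.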
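Since many of these failures are transient network errors, rerunning the build by hand can be replaced with a small retry loop. This is a minimal sketch under stated assumptions: `retry` is a hypothetical helper, the attempt count and delay are arbitrary, and the Maven invocations are left commented out so the snippet has no side effects. Maven's `-rf` (`--resume-from`) option restarts the reactor at a given module; `:hadoop-auth` is used here only because that module failed in this walkthrough.

```shell
# retry MAX DELAY CMD...: run CMD until it succeeds, at most MAX times,
# sleeping DELAY seconds between attempts. Returns 0 on success, 1 otherwise.
retry() {
    retry_max=$1
    retry_delay=$2
    shift 2
    retry_n=1
    while true; do
        if "$@"; then
            return 0
        fi
        if [ "$retry_n" -ge "$retry_max" ]; then
            echo "giving up after $retry_n attempts: $*" >&2
            return 1
        fi
        echo "attempt $retry_n failed, retrying in ${retry_delay}s" >&2
        sleep "$retry_delay"
        retry_n=$((retry_n + 1))
    done
}

# Example usage (commented out on purpose):
# retry 5 30 mvn package -Pdist,native -DskipTests -Dtar
#
# Resuming from the module that failed instead of starting over:
# retry 5 30 mvn package -Pdist,native -DskipTests -Dtar -rf :hadoop-auth
```

One caveat on resuming: modules skipped by `-rf` are no longer part of the reactor, so their artifacts may need to be in the local repository already (for instance from an earlier `mvn install`). A retry loop only helps with transient failures. A module that fails the same way every time needs its actual error investigated.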

Maven-related errors also appeared along the way:

[ERROR] Failed to execute goal org.apache.hadoop:hadoop-maven-plugins:3.1.2:

It looked roughly like the above. I added this to the environment variables:

export MAVEN_OPTS="-Xms256m -Xmx512m"

In the end these modules all passed.

[INFO] Apache Hadoop Amazon Web Services support .......... FAILURE

This one was stuck for a long time, and I confirmed that it was not a network problem.

Failed to collect dependencies at com.amazonaws:DynamoDBLocal:jar:[1.11.86,2.

This error in particular appeared many times.

See the Amazon documentation:

https://docs.aws.amazon.com/amazondynamodb/latest/developerguide/DynamoDBLocal.Maven.html

and this blog post:

https://blog.csdn.net/galiniur0u/article/details/80669408

To use DynamoDB in your application as a dependency:

Download and install Apache Maven. For more information, see Downloading Apache Maven and Installing Apache Maven.

Add the DynamoDB Maven repository to your application's Project Object Model (POM) file:

    <!--Dependency:-->
    <dependencies>
        <dependency>
            <groupId>com.amazonaws</groupId>
            <artifactId>DynamoDBLocal</artifactId>
            <version>[1.11,2.0)</version>
        </dependency>
    </dependencies>
    <!--Custom repository:-->
    <repositories>
        <repository>
            <id>dynamodb-local-oregon</id>
            <name>DynamoDB Local Release Repository</name>
            <url>https://s3-us-west-2.amazonaws.com/dynamodb-local/release</url>
        </repository>
    </repositories>

Add the dependencies and repositories to pom.xml:

    [root@nancycici hadoop-project]# pwd
    /opt/sourcecode/hadoop-2.9.2-src/hadoop-project
    [root@nancycici hadoop-project]# ls
    pom.xml  pom.xml.bk  src  target
    [root@nancycici hadoop-project]# vi pom.xml

However, the build still failed at this point.

At this point I installed a different Java build with yum (java-1.8.0-openjdk-1.8.0.102-4.b14.el7.x86_64) and switched to it:

    [root@nancycici jvm]# pwd
    /usr/lib/jvm
    [root@nancycici jvm]# ls
    java-1.7.0-openjdk-1.7.0.211-2.6.17.1.el7_6.x86_64  jre-1.7.0-openjdk  jre-1.8.0-openjdk-1.8.0.102-4.b14.el7.x86_64
    java-1.8.0-openjdk-1.8.0.102-4.b14.el7.x86_64  jre-1.7.0-openjdk-1.7.0.211-2.6.17.1.el7_6.x86_64  jre-openjdk
    jre  jre-1.8.0
    jre-1.7.0

The final environment variables:

    #export JAVA_HOME=/usr/java/jdk1.8.0
    export JAVA_HOME=/usr/lib/jvm/java-1.8.0-openjdk-1.8.0.102-4.b14.el7.x86_64
    export MAVEN_HOME=/opt/software/apache-maven-3.6.0
    export PROTOC_HOME=/usr/local/protobuf
    export MAVEN_OPTS="-Xms256m -Xmx512m"
    export FINDBUGS_HOME=/opt/software/findbugs-1.3.9
    export PATH=$FINDBUGS_HOME/bin:$PROTOC_HOME/bin:$MAVEN_HOME/bin:$JAVA_HOME/bin:$JAVA_HOME/jre/bin:$PATH
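After switching JDKs like this, it is easy for PATH to keep resolving a tool from the old location. The sketch below is one way to check that each tool on PATH really lives under the home directory set for it. `tool_under_home` is a hypothetical helper, not something from the original setup, and it compares paths as strings without resolving symlinks.

```shell
# tool_under_home TOOL HOME: succeeds if the TOOL found on PATH is located
# somewhere below HOME. An empty HOME never matches.
tool_under_home() {
    [ -n "$2" ] || return 1
    tool_path=$(command -v "$1") || return 1
    case "$tool_path" in
        "$2"/*) return 0 ;;
        *)      return 1 ;;
    esac
}

# Pair each tool with the variable that is supposed to own it.
for pair in "java:${JAVA_HOME:-}" "mvn:${MAVEN_HOME:-}" "protoc:${PROTOC_HOME:-}"; do
    pair_tool=${pair%%:*}
    pair_home=${pair#*:}
    if tool_under_home "$pair_tool" "$pair_home"; then
        echo "ok     $pair_tool is under $pair_home"
    else
        echo "check  $pair_tool does not resolve under '$pair_home'"
    fi
done
```

A "check" line is only a hint to look closer. For example, a `java` that resolves to /usr/bin/java through the alternatives system would be flagged even when it points at the intended JDK.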

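Then I turned off the firewall:

systemctl stop firewalld.service # stop firewalld

systemctl disable firewalld.service # keep firewalld from starting at boot

After five days of assorted ordeals, the build finally succeeded: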

    [INFO] Reactor Summary for Apache Hadoop Main 2.9.2:
    [INFO]
    [INFO] Apache Hadoop Main ................................. SUCCESS [ 6.019 s]
    [INFO] Apache Hadoop Build Tools .......................... SUCCESS [ 4.249 s]
    [INFO] Apache Hadoop Project POM .......................... SUCCESS [ 4.580 s]
    [INFO] Apache Hadoop Annotations .......................... SUCCESS [ 7.920 s]
    [INFO] Apache Hadoop Assemblies ........................... SUCCESS [ 0.603 s]
    [INFO] Apache Hadoop Project Dist POM ..................... SUCCESS [ 6.075 s]
    [INFO] Apache Hadoop Maven Plugins ........................ SUCCESS [ 11.859 s]
    [INFO] Apache Hadoop MiniKDC .............................. SUCCESS [ 10.455 s]
    [INFO] Apache Hadoop Auth ................................. SUCCESS [ 13.511 s]
    [INFO] Apache Hadoop Auth Examples ........................ SUCCESS [ 7.333 s]
    [INFO] Apache Hadoop Common ............................... SUCCESS [02:09 min]
    [INFO] Apache Hadoop NFS .................................. SUCCESS [ 13.265 s]
    [INFO] Apache Hadoop KMS .................................. SUCCESS [ 19.103 s]
    [INFO] Apache Hadoop Common Project ....................... SUCCESS [ 0.250 s]
    [INFO] Apache Hadoop HDFS Client .......................... SUCCESS [ 34.898 s]
    [INFO] Apache Hadoop HDFS ................................. SUCCESS [01:30 min]
    [INFO] Apache Hadoop HDFS Native Client ................... SUCCESS [ 5.592 s]
    [INFO] Apache Hadoop HttpFS ............................... SUCCESS [ 28.792 s]
    [INFO] Apache Hadoop HDFS BookKeeper Journal .............. SUCCESS [ 13.432 s]
    [INFO] Apache Hadoop HDFS-NFS ............................. SUCCESS [ 7.642 s]
    [INFO] Apache Hadoop HDFS-RBF ............................. SUCCESS [ 41.155 s]
    [INFO] Apache Hadoop HDFS Project ......................... SUCCESS [ 0.096 s]
    [INFO] Apache Hadoop YARN ................................. SUCCESS [ 0.109 s]
    [INFO] Apache Hadoop YARN API ............................. SUCCESS [ 19.196 s]
    [INFO] Apache Hadoop YARN Common .......................... SUCCESS [ 50.308 s]
    [INFO] Apache Hadoop YARN Registry ........................ SUCCESS [ 9.489 s]
    [INFO] Apache Hadoop YARN Server .......................... SUCCESS [ 0.101 s]
    [INFO] Apache Hadoop YARN Server Common ................... SUCCESS [ 17.042 s]
    [INFO] Apache Hadoop YARN NodeManager ..................... SUCCESS [ 18.634 s]
    [INFO] Apache Hadoop YARN Web Proxy ....................... SUCCESS [ 4.028 s]
    [INFO] Apache Hadoop YARN ApplicationHistoryService ....... SUCCESS [ 9.632 s]
    [INFO] Apache Hadoop YARN Timeline Service ................ SUCCESS [ 6.423 s]
    [INFO] Apache Hadoop YARN ResourceManager ................. SUCCESS [ 33.567 s]
    [INFO] Apache Hadoop YARN Server Tests .................... SUCCESS [ 2.233 s]
    [INFO] Apache Hadoop YARN Client .......................... SUCCESS [ 8.271 s]
    [INFO] Apache Hadoop YARN SharedCacheManager .............. SUCCESS [ 5.145 s]
    [INFO] Apache Hadoop YARN Timeline Plugin Storage ......... SUCCESS [ 4.856 s]
    [INFO] Apache Hadoop YARN Router .......................... SUCCESS [ 6.291 s]
    [INFO] Apache Hadoop YARN TimelineService HBase Backend ... SUCCESS [ 10.117 s]
    [INFO] Apache Hadoop YARN Timeline Service HBase tests .... SUCCESS [ 4.253 s]
    [INFO] Apache Hadoop YARN Applications .................... SUCCESS [ 0.062 s]
    [INFO] Apache Hadoop YARN DistributedShell ................ SUCCESS [ 4.325 s]
    [INFO] Apache Hadoop YARN Unmanaged Am Launcher ........... SUCCESS [ 2.326 s]
    [INFO] Apache Hadoop YARN Site ............................ SUCCESS [ 0.106 s]
    [INFO] Apache Hadoop YARN UI .............................. SUCCESS [ 0.049 s]
    [INFO] Apache Hadoop YARN Project ......................... SUCCESS [ 7.882 s]
    [INFO] Apache Hadoop MapReduce Client ..................... SUCCESS [ 0.271 s]
    [INFO] Apache Hadoop MapReduce Core ....................... SUCCESS [ 34.480 s]
    [INFO] Apache Hadoop MapReduce Common ..................... SUCCESS [ 24.368 s]
    [INFO] Apache Hadoop MapReduce Shuffle .................... SUCCESS [ 6.248 s]
    [INFO] Apache Hadoop MapReduce App ........................ SUCCESS [ 11.452 s]
    [INFO] Apache Hadoop MapReduce HistoryServer .............. SUCCESS [ 7.556 s]
    [INFO] Apache Hadoop MapReduce JobClient .................. SUCCESS [ 5.185 s]
    [INFO] Apache Hadoop MapReduce HistoryServer Plugins ...... SUCCESS [ 2.128 s]
    [INFO] Apache Hadoop MapReduce Examples ................... SUCCESS [ 7.005 s]
    [INFO] Apache Hadoop MapReduce ............................ SUCCESS [ 3.082 s]
    [INFO] Apache Hadoop MapReduce Streaming .................. SUCCESS [ 5.188 s]
    [INFO] Apache Hadoop Distributed Copy ..................... SUCCESS [ 9.639 s]
    [INFO] Apache Hadoop Archives ............................. SUCCESS [ 2.798 s]
    [INFO] Apache Hadoop Archive Logs ......................... SUCCESS [ 2.937 s]
    [INFO] Apache Hadoop Rumen ................................ SUCCESS [ 9.550 s]
    [INFO] Apache Hadoop Gridmix .............................. SUCCESS [ 6.652 s]
    [INFO] Apache Hadoop Data Join ............................ SUCCESS [ 3.858 s]
    [INFO] Apache Hadoop Ant Tasks ............................ SUCCESS [ 3.717 s]
    [INFO] Apache Hadoop Extras ............................... SUCCESS [ 4.609 s]
    [INFO] Apache Hadoop Pipes ................................ SUCCESS [ 1.026 s]
    [INFO] Apache Hadoop OpenStack support .................... SUCCESS [ 6.787 s]
    [INFO] Apache Hadoop Amazon Web Services support .......... SUCCESS [ 19.538 s]
    [INFO] Apache Hadoop Azure support ........................ SUCCESS [ 9.503 s]
    [INFO] Apache Hadoop Aliyun OSS support ................... SUCCESS [ 5.122 s]
    [INFO] Apache Hadoop Client ............................... SUCCESS [ 10.356 s]
    [INFO] Apache Hadoop Mini-Cluster ......................... SUCCESS [ 2.316 s]
    [INFO] Apache Hadoop Scheduler Load Simulator ............. SUCCESS [ 9.908 s]
    [INFO] Apache Hadoop Resource Estimator Service ........... SUCCESS [ 7.750 s]
    [INFO] Apache Hadoop Azure Data Lake support .............. SUCCESS [ 14.210 s]
    [INFO] Apache Hadoop Tools Dist ........................... SUCCESS [ 21.479 s]
    [INFO] Apache Hadoop Tools ................................ SUCCESS [ 0.103 s]
    [INFO] Apache Hadoop Distribution ......................... SUCCESS [01:10 min]
    [INFO] Apache Hadoop Cloud Storage ........................ SUCCESS [ 1.427 s]
    [INFO] Apache Hadoop Cloud Storage Project ................ SUCCESS [ 0.078 s]
    [INFO] ------------------------------------------------------------------------
    [INFO] BUILD SUCCESS
    [INFO] ------------------------------------------------------------------------
    [INFO] Total time: 17:00 min
    [INFO] Finished at: 2019-04-19T09:15:30+08:00
    [INFO] ------------------------------------------------------------------------
    [root@nancycici hadoop-2.9.2-src]#

Path of the built package:

    main:
         [exec] $ tar cf hadoop-2.9.2.tar hadoop-2.9.2
         [exec] $ gzip -f hadoop-2.9.2.tar
         [exec]
         [exec] Hadoop dist tar available at: /opt/sourcecode/hadoop-2.9.2-src/hadoop-dist/target/hadoop-2.9.2.tar.gz
         [exec]

These fixes will not necessarily suit everyone, but they are worth trying if you run into similar errors.
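As a last step, the tarball reported in the build output can be given a quick sanity check. This is a minimal sketch: `dist_tarball_ok` is a hypothetical helper, and the /opt/software unpack target in the commented lines is an assumption borrowed from the directory layout used earlier. `hadoop checknative -a` is the Hadoop command that reports which native libraries were picked up, which is the point of building with `-Pdist,native`.

```shell
# dist_tarball_ok TARBALL: succeeds if TARBALL exists and can be listed
# as a gzip-compressed tar archive.
dist_tarball_ok() {
    [ -f "$1" ] && tar -tzf "$1" >/dev/null 2>&1
}

# Path as printed at the end of the build in this walkthrough.
dist=/opt/sourcecode/hadoop-2.9.2-src/hadoop-dist/target/hadoop-2.9.2.tar.gz

if dist_tarball_ok "$dist"; then
    echo "tarball looks readable: $dist"
else
    echo "tarball missing or unreadable: $dist"
fi

# To inspect the native libraries (commented out; unpack location is an assumption):
# tar -xzf "$dist" -C /opt/software
# /opt/software/hadoop-2.9.2/bin/hadoop checknative -a
```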
